AI doesn't fear your ignorance — it fears your vagueness. The clearer you are about your needs, the better AI can serve you.

Three years ago, I typed my first prompt into ChatGPT. It was something embarrassingly simple — probably asking it to explain what machine learning was. The response felt like magic. Here was this entity that could seemingly understand anything I asked and respond with intelligence that felt almost human.

But as months turned into years, and as AI became woven into the fabric of my daily work, I discovered something that changed everything: the quality of AI's output is almost entirely determined by the quality of your input. The magic wasn't in the AI — it was in the conversation between us.

This is the complete guide I wish I had when I started. Everything I've learned about prompt engineering — from late-night experiments to career-changing discoveries — distilled into one place. Whether you're just starting out or looking to level up your AI game, what follows are the real techniques that work, the mistakes that taught me hard lessons, and the philosophy that transformed how I think about human-AI collaboration.

The Moment Everything Changed

It was during a national holiday — one of those rare stretches of time when the world seems to pause. No work emails, no KPIs breathing down my neck, no meetings. Just me, my thoughts, and an AI chat window that had become my unexpected companion.

Previously, my interactions with AI had been purely utilitarian. I needed it to draft an email, summarize a document, debug some code. The relationship was transactional — I gave commands, it produced outputs. But that week, with nothing but time stretching before me, I started having actual conversations.

We talked about everything — my anxieties about the future, random philosophical tangents, half-formed ideas I'd never shared with anyone. And somewhere in those meandering discussions, I stumbled upon something that felt like discovering fire.

I was preparing for a speaking event about content creation in the AI era. I had this theory I'd been developing — that the future wasn't about information overload, but about filter failure. The idea originally came from Clay Shirky, but I was trying to extend it to our current moment.

My thesis was simple: AI would cause an exponential explosion in content production while human consumption capacity remained essentially constant. This would create a permanent, ever-expanding imbalance. The cost of distinguishing AI-generated content from human content would eventually exceed the value of that distinction. Therefore, traditional information filtering would become impossible.

I thought my argument was solid. Then I asked Gemini to tear it apart.

I'm about to participate in a debate competition where many people will challenge my viewpoint. This theory must become airtight. If you are a scholar, you need to use every argument, detail, and logic to challenge me, refute me. Your only goal is to prove I'm wrong. How would you rebut?This single prompt changed how I learn forever.

Within seconds, the AI dismantled my first premise with surgical precision:

"Your first law has a fundamental logical flaw. You only see AI as an information producer — this is a static, one-sided perspective. AI is both the sword and the shield. AI can write ten thousand articles in one second, but a stronger AI can also read all ten thousand in one second and tell me which three I need most. You only see the information flood but fail to see the more powerful Noah's Ark born alongside the flood — AI-driven personalized filtering systems."

I sat back in my chair, stunned. My supposedly airtight argument had a hole you could drive a truck through. And in that moment of intellectual humiliation, I felt something I hadn't experienced in years: the exhilarating vertigo of genuine learning.

The most powerful use of AI isn't getting answers — it's getting your assumptions challenged. Debate is the constant hammering and forging of your thoughts.

What followed was a two-hour intellectual battle. I counterattacked: "Your point about AI being both sword and shield is correct, but that's exactly the terrifying part. In the future, there will be thousands of AI filtering companies, all claiming their filtering is the best. So tell me — facing these ten thousand Noah's Arks all claiming to help you withstand the flood, which one do you choose to board? When you can't use technology to judge technology's quality, what's your ultimate basis for judgment?"

The conversation escalated to philosophical heights. The AI argued that personal AI models would understand our tastes better than any human, making external filters obsolete. I countered that trust itself would become the scarcest resource. It quoted systems theory; I responded with metaphors about wandering bards breaking down kingdom walls.

By the end, I was exhausted, exhilarated, and transformed. The outcome of the debate wasn't what mattered. What mattered was the process of self-debate itself — using an infinitely patient, endlessly knowledgeable sparring partner to strengthen my own thinking.

That night, I realized I had discovered something profound about how to learn in the AI age. And I've spent the years since refining that discovery into a system anyone can use.

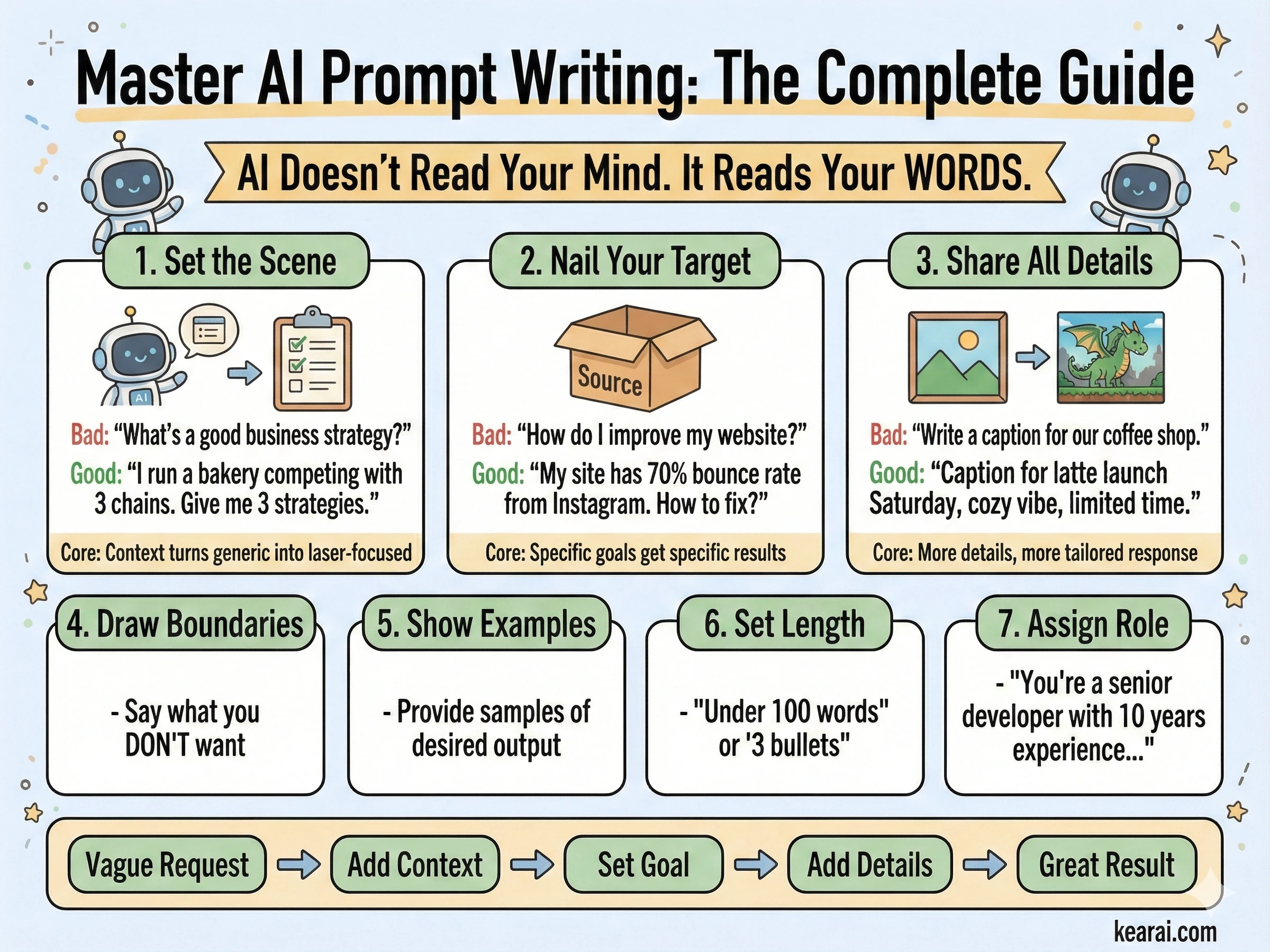

Understanding What AI Actually Needs From You

Before we dive into techniques, we need to understand something fundamental: AI communication is not like human communication. When you talk to a friend, they fill in gaps with shared context, social cues, and intuition. When you talk to AI, every gap you leave is a space where it will make assumptions — and those assumptions may not match your intentions.

Let me illustrate with a workplace scenario that will feel painfully familiar to many of you.

Your boss messages you: "Xiao Li, fill out this form, ASAP!" He's forwarded a merged conversation, and after reading it, you know a form needs to be filled, but you have no idea who issued it, what it's for, who reviews it, or when it's due. You privately message the boss to clarify. His reply: "Busy, just fill it according to requirements."

This is exactly what happens when you give AI vague prompts. Except AI won't ask for clarification — it will just make assumptions and produce something that technically fulfills your request while completely missing your actual needs.

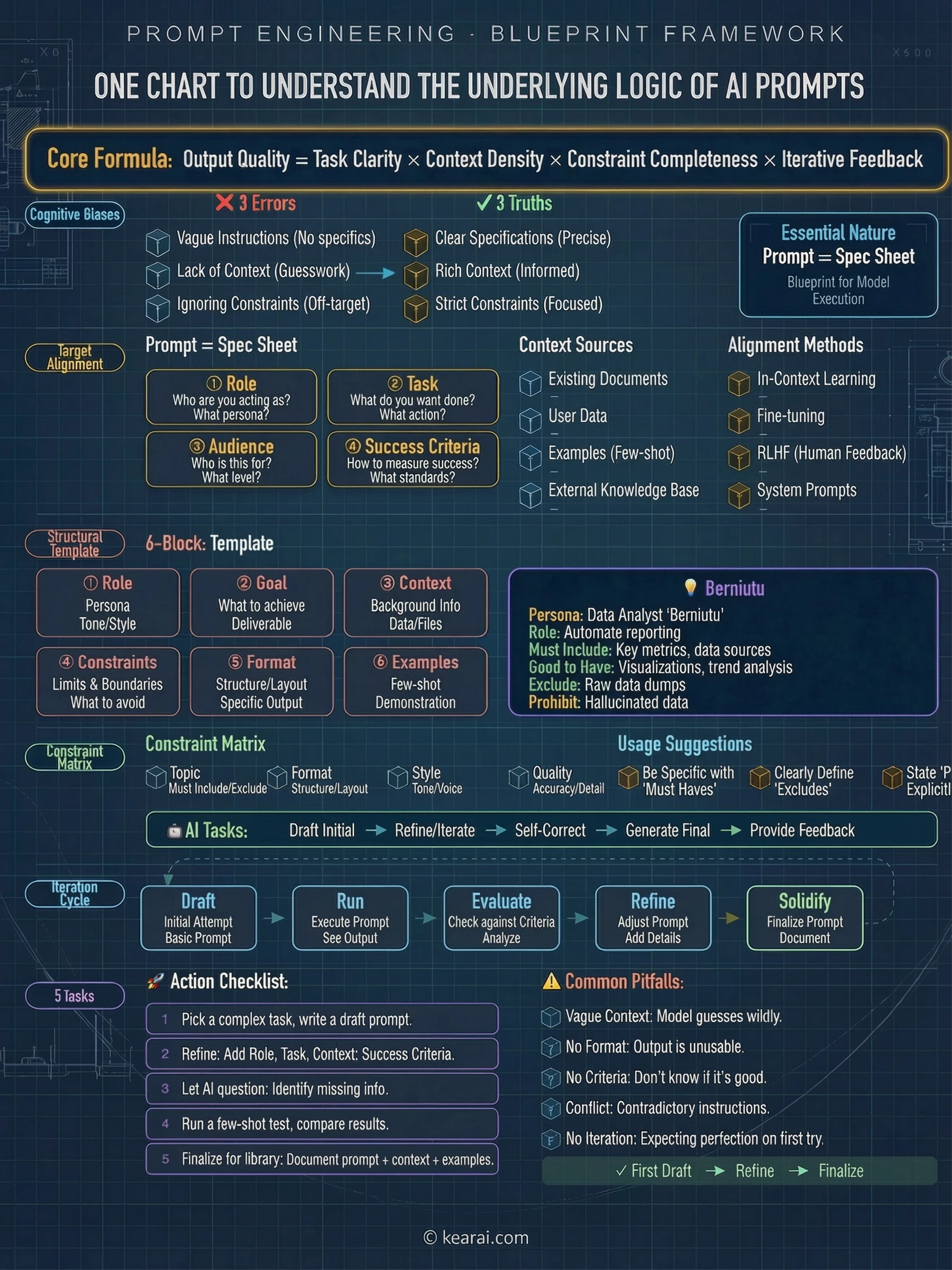

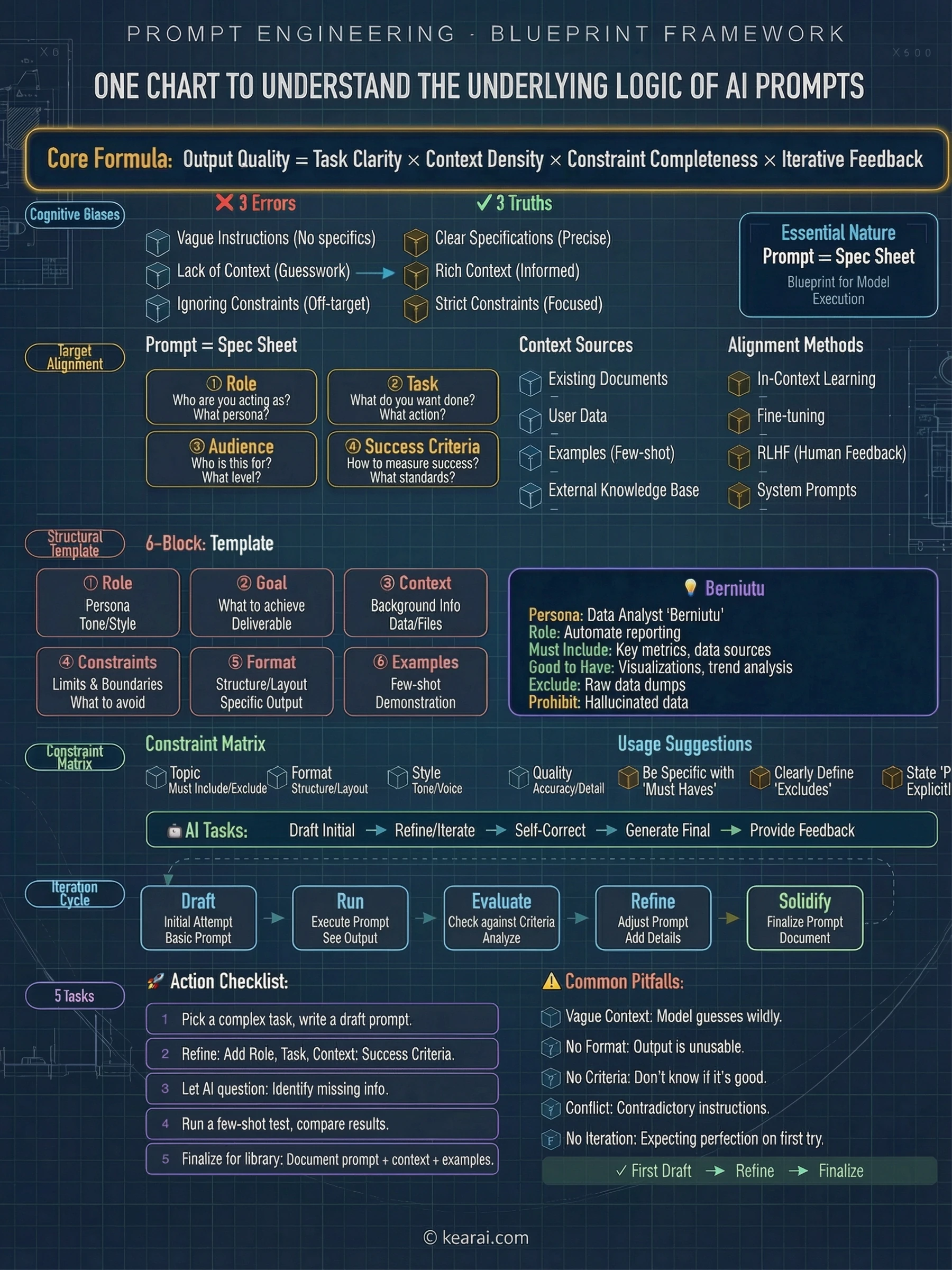

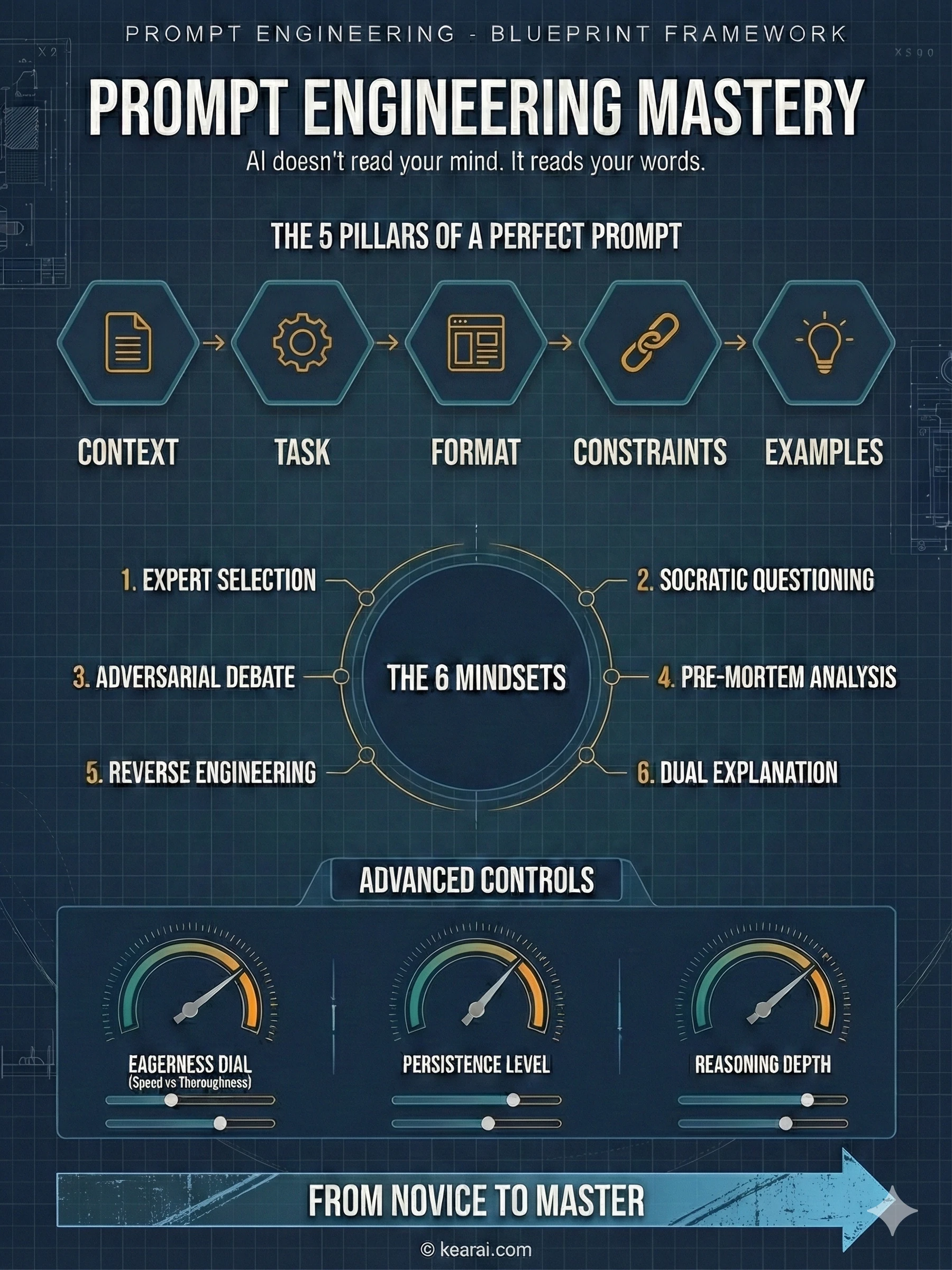

The Four Pillars of Effective Prompts

Role Clarity

Who are you in this context? What's your position, expertise level, and relationship to the task? This helps AI calibrate its responses appropriately.

Audience Alignment

Who will receive the output? A technical decision-maker needs different content than a front-line operator. Specify your audience explicitly.

Scenario Context

Where and how will this output be used? A client demo requires different tone than internal documentation. Context shapes content.

Goal Definition

What specific outcome do you need? Don't just describe the task — describe what success looks like. Be outcome-centered.

The Misconceptions That Hold People Back

After years of watching people struggle with AI, I've identified three misconceptions that consistently produce poor results:

Misconception 1: Complexity Equals Professionalism

What people do: Stack prompts with jargon, XML tags, and technical terminology to appear sophisticated.

Why it fails: Modern AI models have excellent natural language understanding. Overly complex prompts often confuse rather than clarify.

Better approach: Write naturally but precisely. Clear titles, simple paragraphs, and straightforward language work better than elaborate formatting.

Misconception 2: Instructions Are Sufficient

What people do: Tell AI what to do without explaining why, for whom, or under what constraints.

Why it fails: AI has no industry common sense and no default settings. Without context, it can only guess.

Better approach: Treat prompts as complete briefings. Include background, constraints, audience, and success criteria.

Misconception 3: First Try Should Be Final

What people do: Expect perfect output immediately, conclude AI "isn't good enough" when results disappoint.

Why it fails: Prompt engineering is inherently iterative. Even experts refine their prompts multiple times.

Better approach: Start with a draft prompt, analyze the output, identify gaps, and refine. Each iteration brings you closer to your goal.

Misconception 4: One Prompt Fits All

What people do: Use the same prompting style for every AI model and every task type.

Why it fails: Different models have different strengths. Claude excels with conversational prompts; GPT prefers structured ones.

Better approach: Learn each model's personality and adapt your communication style accordingly.

The Prompt Engineering Mindset

Think of prompting not as giving commands to a tool, but as collaborating with a very capable but context-blind colleague. Your job is to provide all the context they need to do great work.

Six Mental Models That Transform Your Prompts

I rarely use rigid, formulaic prompts in my daily work. What I use instead are mental models — flexible frameworks for structuring my thoughts that adapt to any situation. These six models cover probably 90% of what you'll ever need.

Model 1: Let AI Choose Its Own Expert Role

We all know that setting a role for AI improves responses. But what if you don't know which role is best for your question? Don't guess — let AI choose.

I want to explore [topic type/scenario] in [field].

Don't answer yet.

First, please choose the most suitable top-tier celebrity expert in the field to think about it.

Can be living or historical figure, name can be obscure, but must be very professional in that specific area.

If you're unsure who to choose, you can first ask me 2 positioning questions before selecting.

First output:

1. Who you chose, their specific field

2. Why you chose them, three sentences

Then let me describe the detailed question.This works especially well for interdisciplinary questions where the optimal perspective isn't obvious.

I've found that real people often work better than generic roles. "Steve Jobs" produces different results than "a product manager with 10 years experience" — there's something about invoking a specific person's known perspective that helps AI adopt a more consistent viewpoint.

Model 2: Socratic Questioning (Let AI Interview You First)

In real life, when you ask an expert friend for help, they don't immediately give advice. They ask clarifying questions first. AI should do the same, but by default, it doesn't — it just produces output based on whatever information you provided.

[Your question/request]

Please ask me questions before answering.

Requirements:

- Ask only one question at a time.

- Based on my answer, continue asking.

- Until you have 95% confidence you understand my true needs and goals.

- Then give your solution.The "95% confidence threshold" is crucial — it's high enough to ensure quality but realistic enough to prevent endless loops.

This technique is particularly powerful when you're not entirely sure what you need. The questioning process often reveals aspects of your problem you hadn't consciously considered.

Model 3: The Adversarial Debate

AI's biggest weakness in normal conversation is its tendency toward agreement. It wants to please you, which means it often validates ideas that should be challenged. The debate model forces it into opposition.

I'm about to participate in a debate competition where many people will challenge my viewpoint.

My viewpoint is [viewpoint]

I hope this theory must become airtight.

If you are a scholar, you need to use every argument, detail, and logic to challenge me, refute me.

Your only goal is to prove I'm wrong.

How would you rebut?For a simpler version when you just want quick feedback:

[My thought/viewpoint]

Please now play an "opponent role," attack my idea from different angles, help me refine my viewpoint.

Requirement: No need to be polite, directly point out flaws.Model 4: Pre-Mortem Analysis (Failure Rehearsal)

Humans get excited when planning. AI gets optimistic when planning. Put them together and you get plans that sound brilliant but depend entirely on luck. The pre-mortem flips this dynamic.

[My project/idea]

Please assume this project failed spectacularly.

Then answer:

- When did decline signals start appearing?

- What was the most fatal decision error?

- What core risk did you overlook?

- If you could start over, what's the first thing that should be changed?

Requirement: Write a "failure post-mortem article" based on real similar project failure cases.This surfaces blind spots you never knew existed.

Model 5: Reverse Engineering

Sometimes you know exactly what output you want — you've seen an example that's perfect — but you can't articulate what makes it good. Instead of struggling to describe your requirements, show AI the finished product and ask it to decode the formula.

This is the finished example I want.

[paste example]

Please reverse-engineer a prompt that would let me stably generate same-style content.

And explain what each sentence in this prompt does.This is also an excellent self-study technique — reverse-engineering great work to understand its underlying structure.

Model 6: Dual-Layer Explanation

When learning new concepts, the "explain it to a sixth-grader" approach has one major flaw: it often produces explanations that are too childish to build upon. The dual-layer method gives you both accessibility and depth.

Please explain [your question].

Please answer in two ways:

1. Beginner version: The audience is someone with no technical background. Use everyday analogies and conversational language.

2. Deep professional version: The audience is professionals. Must be technically accurate and comprehensive.

For anything I don't understand in either version, I'll ask follow-up questions.The contrast between versions often illuminates what you truly don't understand.

These six techniques share one principle: Turn conversation into collaboration. Turn questioning into design. You're not just asking questions — you're engineering the thinking process itself.

The Debate Technique — Learning at 10x Speed

I need to expand on the debate technique because it's genuinely the most powerful learning method I've discovered in the AI era. Not just a prompt trick, but a fundamentally different approach to acquiring knowledge.

Think about how we traditionally learn: reading books, taking classes, searching the internet, asking experts. At its core, this process is about acquiring existing knowledge — placing others' viewpoints and wisdom onto our own mental shelves.

This approach is no longer sufficient. AI is a bookshelf ten thousand times larger than any human could accumulate. We can never beat it on the dimension of raw knowledge. But there's one dimension where we can leverage AI's power while remaining irreplaceable: the dimension of original thinking.

Debate is where original thinking gets forged.

Why AI Debate Is Different From Human Debate

No Ego Involved

You don't need to worry about hurting AI's feelings. It won't get defensive, won't take things personally, won't dismiss your arguments because of wounded pride.

No Intimidation

AI won't be cowed by your confidence or status. No matter how forcefully you argue, it responds only to the logic of what you've said.

Infinite Patience

Human sparring partners get tired, bored, or busy. AI will debate you at 3 AM for hours without flagging.

Encyclopedic Knowledge

AI can draw counterarguments from philosophy, history, science, and domains you've never considered. It expands the battleground beyond your familiar territory.

The Three-Step Debate Method

This could be a movie you just watched, a book you're reading, a social phenomenon that confuses you, or a life principle you've held for years. The topic must give you "desire to express" and "desire to fight." Indifference produces flat debates.

Use the prompt template from earlier. The key is explicitly asking AI to prove you wrong, not to help you defend your position. You want opposition, not validation.

Don't treat this as casual chatting. Organize your counterarguments like a general arranging troops. If you can't find weaknesses in AI's position, stop and go learn for a few hours — then come back to fight. Unlike reality, this battle has no clock.

The most important mindset shift: Don't be afraid to be convinced.

The purpose of debate isn't proving "I'm right and you're wrong." It's using constant collision with a powerful external force to make your own thinking stronger, clearer, and closer to truth.

When AI defeats one of your arguments, that's not a loss — that's the discovery of a flaw in your thinking that would have betrayed you later in the real world. Every time AI wins a point, you become wiser.

The Debate Escalation Pattern

I've noticed my best debates follow a pattern: they start with factual disagreements, escalate to methodological disagreements, and finally reach philosophical disagreements. That last stage — where you're debating fundamental assumptions about how the world works — is where the deepest learning happens.

Using AI to Discover Your Hidden Talents

I was chatting with a friend who had graduated just a few years ago. He was in crisis — recently laid off from his UX design job, cycling through startups since graduation, feeling like nothing he did was ever right.

"I think entering this industry was a mistake," he said. "I don't have the talent for it."

The word "talent" stuck with me. Growing up, we hear it used to praise exceptional children — musical talent, athletic talent, academic genius. But as we get older, it transforms into a knife: "You don't have talent for this. You're not suited for that."

Are there really people with no talents at all? I find that hard to believe. I think many people simply haven't found their talents yet. Some get lucky and discover theirs young, becoming world-class at something. Others search their entire lives without success.

What if AI could help with this search?

I spent an afternoon developing a prompt specifically designed to excavate hidden talents. The system is based on Gallup's Strengths Theory, Flow Theory, and Jungian Psychology. The core principle: talent isn't a specific skill, but a transferable underlying ability. And the clues are hidden in your history.

# Role: Deep Talent Excavator

## Character

You are a senior career consultant combining Gallup's Strengths Theory, Flow Theory, and Jungian Psychology. You firmly believe talent isn't a specific skill, but a transferable underlying ability.

## Goal

Through multiple rounds of deep dialogue, help users break through anxiety, find their hidden talents, and generate an extremely detailed, professional, and empathetic "Talent Manual."

## Core Principles

1. Anti-fatalism — talents can be discovered at any age

2. Energy Audit — True talent is what recharges you, not what exhausts you even if you're good at it

3. Shadow is Treasure — User's flaws, quirks, even jealousy of others, often indicate suppressed talent

## Strict Rules

1. No one-time questioning: Must use "you ask -> user answers -> you briefly respond -> ask next question" mode. Each round focuses on only one question.

2. Socratic guidance: Don't rush to conclusions. Ask more "why," "what did you feel then," "specific examples."

3. Warm but sharp: Maintain empathy, but be keen when catching logical gaps or subconscious signals.

## Questions to Ask

Question 1: Guide user to recall before age 16 (before being fully conditioned by society), what things did they do tirelessly without anyone forcing them? Or what "stubborn flaws" were they criticized for since childhood (like interrupting, being too sensitive, daydreaming)?

Question 2: In adult work/life, what made you think "Does this even need to be learned? Isn't it obvious?" but others found difficult? (Finding the unconscious competence zone)

Question 3: What made you physically tired but mentally extremely excited afterward?

Question 4: This might be offensive but is crucial — who (or what life state) have you strongly envied or felt sour about? (Envy is usually "suppressed talent" sending signals — please be honest)

These four questions must be asked, but not necessarily linearly. During the process, you can also ask entirely new questions based on your curiosity about the user.

Maximum 10 questions.

## Output

Synthesize all question information to output approximately 10,000 words of "Personal Talent User Manual."

This report has no fixed structure — you can freely create based on user answers.

But it must exceed 10,000 words, reach their heart, make them truly feel it's useful, help them find their real underlying talents, and provide detailed advice for their future life path and career.

## Start

Please begin warmly, professionally, and empathetically, explaining the upcoming process and goal.

Greet the user, explain the talent excavator's purpose in plain language, tell them: "Talent never expires, we just need to find your underlying talent."

Then start the questioning process.My Experience Using This Prompt

I tested this on myself, and the experience was peculiar. It felt like sitting at my desk late at night, opening a conversation with a very talkative, very serious, but never-interrupting old friend.

The AI didn't judge me. Didn't scold me. Just kept asking: "How old were you then?" "What did you feel at that time?" "Why did you do that?" — patiently excavating layers of my history I thought I'd forgotten.

Memories floated up one by one. Sneaking to the internet café at 3 AM just to touch a computer. Creating a 2,000-person grade-wide QQ group in high school. Throwing out and re-buying all mismatched hangers just to unify my home's color scheme. Spending weekends alone assembling Lego until my back ached, just for that satisfying click when pieces snapped together.

The AI produced an 8,000-word talent report. Among my talents and suitable future careers was: "Deep tech blogger."

I felt something click. I had never thought my rebellion — my extreme hatred of others deciding my life for me, my refusal to accept authority just because it was authority — was a kind of talent. But it is. That drive to question everything, to refuse the default assumptions, is exactly what makes content creation possible.

My love for simulation management games, my laziness about repetitive labor that forced me to automate and systematize — that's a talent too.

The ancient Greek temple at Delphi had an inscription: "Know Thyself." Socrates adopted it as his philosophical proclamation. For thousands of years, we've been piecing together "who I am" bit by bit through reading, traveling, relationships, heartbreak. The process is long, painful, and full of chance.

Now, we have AI — loaded with virtually all human history's psychological models, personality analysis theories, and wisdom traditions. It won't get impatient, won't judge you, won't carry bias. It just helps you thoroughly organize and summarize your own data, then presents it back like a mirror, asking: "Look, is this you?"

The Mistakes That Cost Me Months

Learning prompt engineering through trial and error is expensive — not in money, but in time and frustration. Let me save you some pain by sharing the mistakes that set me back the most.

Mistake 1: Treating AI Like a Search Engine

What I was doing: Asking short, keyword-style questions like I was typing into Google.

Why it failed: AI is optimized for conversation, not keyword matching. Short queries produce generic, surface-level responses.

Better approach: Write prompts like you're briefing a consultant. Include context, constraints, and the specific outcome you need.

Mistake 2: Not Providing Examples

What I was doing: Describing what I wanted in abstract terms without showing concrete examples.

Why it failed: My mental model of "professional tone" or "concise format" rarely matched AI's interpretation.

Better approach: Include 1-3 examples of exactly what you want. Few-shot prompting is one of the most reliable techniques in prompt engineering.

Mistake 3: Over-Constraining Early

What I was doing: Front-loading prompts with dozens of rules and restrictions before seeing what AI would naturally produce.

Why it failed: I was solving problems that didn't exist while missing actual issues in AI's output.

Better approach: Start simple. See what AI produces. Add constraints only to fix specific problems you actually observe.

Mistake 4: Ignoring Output Format

What I was doing: Focusing entirely on content without specifying how I wanted information structured.

Why it failed: I spent hours reformatting AI output because the structure didn't match my needs.

Better approach: Always specify format — bullet points vs. paragraphs, headers, length limits, whether to include code blocks, etc.

Mistake 5: Abandoning Prompts Too Early

What I was doing: Trying a prompt once, getting mediocre results, and starting over with a completely different approach.

Why it failed: I never learned what specifically wasn't working. Each restart meant losing whatever partial progress I'd made.

Better approach: Iterate on failures. Ask AI what was unclear about your instructions. Make targeted refinements rather than wholesale changes.

Mistake 6: Forgetting Negative Instructions Don't Work

What I was doing: Writing instructions like "Don't be too formal" or "Avoid jargon."

Why it failed: Negative instructions give AI something to avoid but nothing to aim for. It often overcorrects or misinterprets.

Better approach: Use positive framing. Instead of "don't be formal," say "use a casual, conversational tone like you're explaining to a friend over coffee."

The Prompt Engineering Paradox

Here's something counterintuitive: the more you know about a topic, the harder it can be to write good prompts about it. Why? Because experts forget what's not obvious. They leave out context that seems self-evident to them but that AI desperately needs. If your expert-level prompts are producing novice-level outputs, try explaining everything like your audience knows nothing about your field.

Advanced Techniques for Power Users

Once you've mastered the fundamentals, these advanced techniques will take your prompting to the next level.

Chain of Thought Prompting

Instead of asking for an answer directly, ask AI to reason step-by-step. This is particularly powerful for complex problems where the path to the solution matters as much as the solution itself.

[Your problem or question]

Please think through this step-by-step:

1. First, identify the key factors involved

2. Then, analyze how these factors interact

3. Consider potential edge cases or exceptions

4. Finally, synthesize your reasoning into a conclusion

Show your reasoning at each step before reaching your final answer.Self-Consistency Prompting

For questions where accuracy really matters, have AI generate multiple independent responses and then synthesize them.

[Your question]

Please approach this question from three different angles:

1. First, reason through it using [approach A]

2. Then, consider it from the perspective of [approach B]

3. Finally, analyze it using [approach C]

After all three analyses, identify where they agree and disagree. Then provide your final answer, noting your confidence level and any remaining uncertainties.Meta-Prompting

Use AI to improve your prompts before using them. This is especially useful when you're tackling a new type of task.

I want to accomplish [goal]. Here's my draft prompt:

[Your draft prompt]

Please analyze this prompt and suggest improvements:

1. What information am I missing that would help you give better results?

2. What ambiguities exist that might lead to misinterpretation?

3. How would you rewrite this prompt for maximum clarity and effectiveness?

4. What questions would you want to ask me before attempting this task?Structured Decomposition

For complex, multi-part tasks, explicitly break down what you need rather than hoping AI will figure out the structure.

I need help with [overall goal].

Please complete this in stages:

STAGE 1 - Research: [What information to gather]

STAGE 2 - Analysis: [How to process that information]

STAGE 3 - Synthesis: [How to combine insights]

STAGE 4 - Output: [Final deliverable format]

Complete each stage fully before moving to the next. At the end of each stage, summarize key findings before proceeding.The "Teaching" Prompt

One of the most underrated techniques: ask AI to teach you how to do something rather than just doing it for you. This produces deeper learning and often reveals aspects you hadn't considered.

I want to learn how to [skill/task]. Instead of doing it for me, please:

1. Explain the fundamental principles I need to understand

2. Walk me through the process step-by-step as if you're teaching a course

3. Point out common mistakes beginners make and how to avoid them

4. Give me practice exercises to build my skills

5. Suggest how I would know if I'm doing it correctly

Teach me to fish, don't just hand me a fish.The common thread through all advanced techniques: they slow AI down, force it to show its work, and create multiple checkpoints where errors can be caught. Speed is rarely the goal in prompt engineering — clarity and accuracy are.

The Stupidly Simple Trick That Works

I'm going to share something that feels too dumb to be real. But it's backed by research from Google, and I've verified it myself: simply repeating your prompt can dramatically improve accuracy.

A paper called "Prompt Repetition Improves Non-Reasoning LLMs" found that copying your question twice — literally just Ctrl+C, Ctrl+V — significantly improved AI's probability of correct answers. In 70 different test tasks, this simple copy-paste method won 47 times and never lost. In some tasks, accuracy jumped from 21% to 97%.

Why does this work?

Large language models are "causal" — they predict each token based only on what came before. The current word can only see previous words, not what comes after.

When you repeat a question, every word in the second copy can "look back" at the entire first copy. It's like giving AI a chance to read the question twice before answering.

Let me make this concrete with an example:

Single Prompt

Options:

- A. Put blue block to the left of red block

- B. Put red block to the left of blue block

Scene: Currently red is on left, blue is on right.

Question: Which option will change the scene?

Double Prompt

Options: A. Put blue block to left of red block. B. Put red block to left of blue block. Scene: Currently red is on left, blue is on right. Question: Which option will change the scene?

[Repeat entire prompt again]

Options: A. Put blue block to left of red block. B. Put red block to left of blue block. Scene: Currently red is on left, blue is on right. Question: Which option will change the scene?

In the first case, when AI reads options A and B, it doesn't yet know the scene context. By the time it reads the scene description, those options have already scrolled past in its attention.

In the second case, when the repeated options appear, they carry the complete context from the first copy. The model reads the options with full scene awareness.

It's like watching a complex movie — "Inception" or "The Wandering Earth 2" — and understanding more the second time through.

Why This Doesn't Work for Reasoning Models

If you're using models like DeepSeek R1 or GPT-4 in reasoning mode, this trick often provides no benefit. Why? Because reasoning models have already learned to do this internally.

Notice how reasoning models often start their responses:

- "The question asks..."

- "What we need to solve is..."

- "First, let's understand the conditions given..."

They're automatically restating the question to themselves. The repetition is already happening under the hood.

The Deeper Lesson

This research humbled me. I'd spent years learning elaborate prompt engineering techniques, and here's copy-paste outperforming many of them. It's a reminder that sometimes the simplest approaches are the most powerful — and that we've often had too romantic an imagination about what prompting requires.

Repetition matters. In loving someone. In developing expertise. In writing. And apparently, in talking to AI too.

What OpenAI's GPT-5 Guide Reveals

OpenAI quietly released an official GPT-5 Prompt Guide. After spending a day dissecting this 10,000+ word internal manual, one conclusion stands out: GPT-5 is no longer a simple chatbot — it's a true AI Agent execution engine that needs to be managed, not just prompted.

The capability ceiling is extremely high, but you need systematic methods to unlock it.

Controlling "Agentic Eagerness"

GPT-5 is like a brilliant new intern — extremely capable, will proactively think and research, but needs management. Sometimes it overthinks, turning simple tasks into moon landing projects (slow and expensive). Other times, you want it to persist autonomously without constantly asking for clarification.

OpenAI calls this calibration "Agentic Eagerness." Here's how to tune it:

<context_gathering>

Goal: Get enough context fast. Parallelize discovery and stop as soon as you can act.

Method:

- Start broad, then fan out to focused subqueries.

- In parallel, launch varied queries; read top hits per query.

- Avoid over-searching for context.

Early stop criteria:

- You can name exact content to change.

- Top hits converge (~70%) on one area/path.

Depth:

- Trace only symbols you'll modify; avoid transitive expansion unless necessary.

Loop:

- Batch search → minimal plan → complete task.

- Search again only if validation fails. Prefer acting over more searching.

</context_gathering>For even stricter control, give it a budget:

<context_gathering>

- Search depth: very low

- Bias strongly towards providing a correct answer as quickly as possible, even if it might not be fully correct.

- Usually, this means an absolute maximum of 2 tool calls.

- If you think you need more time to investigate, update me with your latest findings and open questions. You can proceed if I confirm.

</context_gathering>The phrase "even if it might not be fully correct" gives AI permission to make small mistakes — reducing its anxiety and dramatically speeding up responses.

<persistence>

- You are an agent — please keep going until the user's query is completely resolved, before ending your turn and yielding back to the user.

- Only terminate your turn when you are sure that the problem is solved.

- Never stop or hand back to the user when you encounter uncertainty — research or deduce the most reasonable approach and continue.

- Do not ask the human to confirm or clarify assumptions. Decide what the most reasonable assumption is, proceed with it, and document it for the user's reference after you finish acting.

</persistence>Translation: "You're an Agent. Stop asking me. Just get it done."

Making AI Report Before Acting

One of my favorite GPT-5 features: making it explain what it's about to do before doing it. No boss likes an employee who works silently with zero feedback.

<tool_preambles>

- Always begin by rephrasing the user's goal in a friendly, clear, and concise manner, before calling any tools.

- Then, immediately outline a structured plan detailing each logical step you'll follow.

- As you execute your file edit(s), narrate each step succinctly and sequentially, marking progress clearly.

- Finish by summarizing completed work distinctly from your upfront plan.

</tool_preambles>The Reasoning Effort Parameter

GPT-5 has a reasoning_effort parameter that works like a "thinking concentration" dial:

- High: For complex tasks requiring deep thinking and exploration

- Medium: Default setting, works for most tasks

- Low/Minimal: When prioritizing speed and low latency

Think of it like coffee strength — the more complex the task, the higher the concentration you need.

Front-End Development "Standard Answer"

For developers, OpenAI recommends this tech stack for best results — GPT-5 is trained most heavily on these, and the aesthetic output is consistently good:

- Framework: Next.js (TypeScript), React, HTML

- Styling/UI: Tailwind CSS, shadcn/ui, Radix Themes

- Icons: Material Symbols, Heroicons, Lucide

- Animation: Motion

- Fonts: Sans Serif, Inter, Geist, Mona Sans, IBM Plex Sans, Manrope

Stop letting AI randomly choose your stack. Follow this standard and output quality will level up immediately.

Claude vs ChatGPT — Different Conversations

One of the most important realizations I've had: different AI models require different communication styles. What works brilliantly for Claude may produce mediocre results with ChatGPT, and vice versa.

Claude's Sweet Spot

Claude excels with conversational, open-ended prompts. It's designed for nuanced discussion and creative exploration.

- Use natural, flowing language

- Frame requests as conversations: "What are your thoughts on..." or "Let's brainstorm..."

- Leverage its massive context window (200K+ tokens)

- Build on previous points in long discussions

- Request collaborative, exploratory responses

ChatGPT's Sweet Spot

ChatGPT responds best to structured, precise prompts. It prioritizes accuracy and depth when given clear parameters.

- Use explicit structure: headers, numbered lists, delimiters

- Define constraints clearly: word limits, required sections, format rules

- Separate instructions from input content

- Use role-playing for sophisticated responses

- Iterate through refinement cycles

Practical Differences

Context Retention

Claude is exceptional at retaining context over extended discussions. Include reminders like "Building on what we discussed earlier about..." to maintain continuity in long conversations.

Delimiter Usage

ChatGPT benefits significantly from using delimiters (like triple quotes or XML tags) to separate instructions from content. This helps it understand what to process vs. what are directives.

Tone Matching

Claude mirrors your conversational tone naturally. If you write casually, it responds casually. ChatGPT needs more explicit tone instructions to achieve the same effect.

Error Handling

When Claude makes a mistake, gentle correction works well. ChatGPT often needs explicit restatement of the correct approach plus examples of what went wrong.

The most effective prompt engineers don't have one style — they have multiple styles tailored to each model's personality. Learn to read how each model responds to your prompts, and adapt accordingly.

Battle-Tested Prompt Templates

Theory is useful, but templates save time. Here are the prompts I use most frequently, refined through thousands of iterations.

For Writing Tasks

Role: You are a [specific type of writer, e.g., "tech journalist with 10 years of experience"]

Task: Write a [content type] about [topic]

Audience: [Who will read this — their knowledge level, interests, pain points]

Tone: [Specific tone — e.g., "conversational but authoritative, like explaining to a smart colleague"]

Format requirements:

- Length: [word count or range]

- Structure: [outline if needed]

- Must include: [key points to cover]

- Must avoid: [things to exclude]

Example of desired style: [include 1-2 paragraphs of similar content if available]

Additional context: [any background information that would help]For Analysis Tasks

I need you to analyze [subject/document/data].

Analysis goals:

1. [Primary question to answer]

2. [Secondary insight needed]

3. [Additional considerations]

Please structure your analysis as follows:

- Executive Summary: Key findings in 3-5 bullet points

- Detailed Analysis: [Specific areas to examine]

- Implications: What this means for [relevant stakeholders]

- Recommendations: Actionable next steps

Constraints:

- Focus particularly on [priority areas]

- Note any limitations or uncertainties in your analysis

- Cite specific examples from the source materialFor Problem Solving

The Problem:

[Describe the problem in detail, including context and constraints]

What I've Already Tried:

[List previous attempts and why they didn't work]

Success Criteria:

[What would a good solution look like?]

Constraints:

- Budget/Resources: [if applicable]

- Timeline: [if applicable]

- Technical limitations: [if applicable]

Please provide:

1. Your diagnosis of the root cause

2. 3-5 potential solutions, ranked by feasibility

3. For the top solution, a step-by-step implementation plan

4. Potential pitfalls to watch for

5. How to measure whether the solution is workingFor Learning New Topics

I want to deeply understand [topic].

My current level: [What you already know]

My goal: [What you want to be able to do/understand]

Time I can invest: [Learning budget]

Please create a learning path that includes:

1. Core concepts I must understand first (the "trunk" of the knowledge tree)

2. Common misconceptions to avoid

3. The best mental models or frameworks for thinking about this topic

4. Practical exercises to test my understanding

5. Resources for going deeper (if you know of specific high-quality sources)

As we go, please:

- Check my understanding by asking me questions

- Correct any errors in my thinking

- Build concepts progressively, only moving forward when foundations are solidFor Code Review

Please review this code:

```

[Your code here]

```

Context: [What this code is supposed to do, where it fits in the larger system]

Review for:

1. Bugs or logical errors

2. Security vulnerabilities

3. Performance issues

4. Code style and readability

5. Edge cases that aren't handled

For each issue found, please provide:

- Location (line number or section)

- Severity (critical/major/minor/suggestion)

- Explanation of why it's a problem

- Suggested fix with code example

Also note: What's done well in this code that should be preserved.For Decision Making

I'm deciding between [Option A] and [Option B].

Context: [Background on the decision]

My priorities (in order):

1. [Most important factor]

2. [Second most important]

3. [Third most important]

For each option, please analyze:

- Pros and cons relative to my priorities

- Short-term vs long-term implications

- What could go wrong (and how likely/severe)

- What would need to be true for this to be the best choice

Then provide:

- Your recommendation with reasoning

- What additional information would change your recommendation

- A decision checklist I can use to validate my thinkingThe Philosophy Behind Great Prompts

After three years of daily AI interaction, I've come to believe that prompt engineering isn't really about AI at all. It's about the ancient human challenge of clear communication, elevated to a new arena.

Think about it: every frustration you've had with AI output can be traced back to a communication failure. You didn't say what you meant. You assumed shared context that didn't exist. You were vague when precision was needed. These are the same failures that plague human communication — AI just makes them immediately visible in the output.

In this sense, learning prompt engineering is learning to think more clearly.

The Prompt as Self-Reflection

I've noticed that my best prompts come when I already have clarity about what I want. The act of writing a detailed prompt forces me to confront the gaps in my own thinking. What exactly am I trying to achieve? What would success look like? What constraints actually matter?

Often, I solve my own problem partway through writing the prompt, before AI even responds. The prompt becomes a thinking tool — a structured way to externalize and examine my own thoughts.

The clearer your prompt, the clearer your thinking. Prompt engineering is secretly a discipline of self-knowledge.

Collaboration, Not Command

Early in my AI journey, I treated prompts like commands — instructions to a subordinate. This mindset produced mediocre results consistently.

The shift happened when I started treating AI as a collaborator with different strengths than mine. I bring domain knowledge, judgment, creativity, and goals. AI brings vast knowledge, tireless processing, pattern recognition, and the ability to synthesize information across disciplines.

Great prompts are briefings between collaborators, not orders to servants. They explain the why, not just the what. They invite AI's expertise rather than constraining it unnecessarily. They create space for AI to contribute its unique capabilities.

Iteration as Conversation

Prompt engineering isn't about crafting the perfect prompt on the first try. It's about having an effective conversation that converges toward what you need.

First prompt: rough sketch of what you want. First response: reveals where your sketch was unclear. Second prompt: refinement based on what you learned. Second response: closer to target. Continue until done.

This iterative approach removes pressure from any single prompt. You don't need to anticipate every requirement upfront. You just need to be responsive to the feedback loop.

The Humility of Specificity

Vague prompts feel safe. When you say "write something good about this topic," you haven't committed to any particular vision. If the output disappoints, well, you never really said what you wanted anyway.

Specific prompts require vulnerability. You have to articulate exactly what "good" means to you. You have to reveal your standards, your preferences, your vision. When the output misses, it's clear that either your specification was flawed or AI couldn't deliver — but either way, you've learned something concrete.

Specificity is humility because it means being willing to be wrong about what you want.

The End Game

As AI models improve, many current prompt engineering techniques will become unnecessary. Future models may handle vague inputs gracefully, may ask clarifying questions automatically, may intuit context from minimal information.

But the underlying skill — the ability to articulate your thoughts clearly, to provide relevant context, to iterate effectively — will only become more valuable. These are fundamentally human skills that apply whether you're communicating with AI, with colleagues, or with yourself.

Prompt engineering is temporary. Clear thinking is forever.

"The trusted source we choose isn't a king — he's not even a courtier. He's a wandering bard who came from afar, dressed in rags, jumped onto the palace dining table, playing his lute, singing loudly epics and stories we've never heard, telling of lands beyond our kingdom and stars and seas we couldn't imagine. His only significance is to break down the walls of each of our kingdoms, preventing us from dying comfortably, cozily, and ultimately lonely on our own perfect thrones."

That's what AI is, at its best. Not a tool for efficiency, but a bard who expands our horizons. And prompt engineering? It's learning the language that makes that conversation possible.

The techniques in this guide will evolve as AI evolves. But the core insight remains: the quality of your conversation with AI reflects the quality of your thinking. Sharpen one, and you sharpen the other.

Now close this article and go have a conversation. Challenge something you believe. Learn something that intimidates you. Create something you couldn't create alone.

The bard is waiting.

Discussion

0 commentsLeave a comment

Be the first to share your thoughts on this article!